
How GPT learns layer by layer

Kaichun Qiao, Jason Du, Kelly Hong, Alishba Imran, Erfan Jahanparast, Mehdi Khfifi

arXiv preprint arXiv:2501.07108 · 2025

Abstract

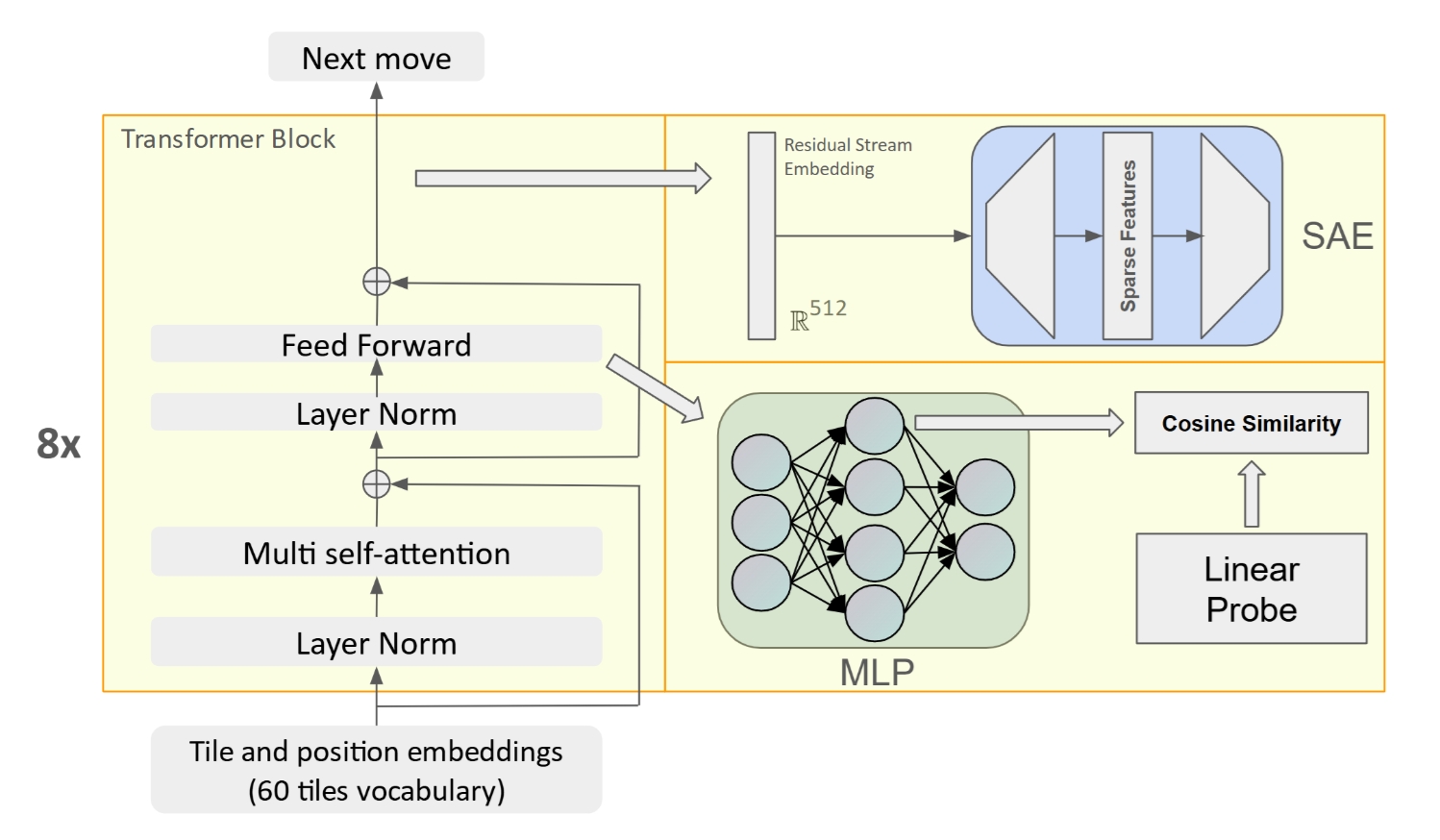

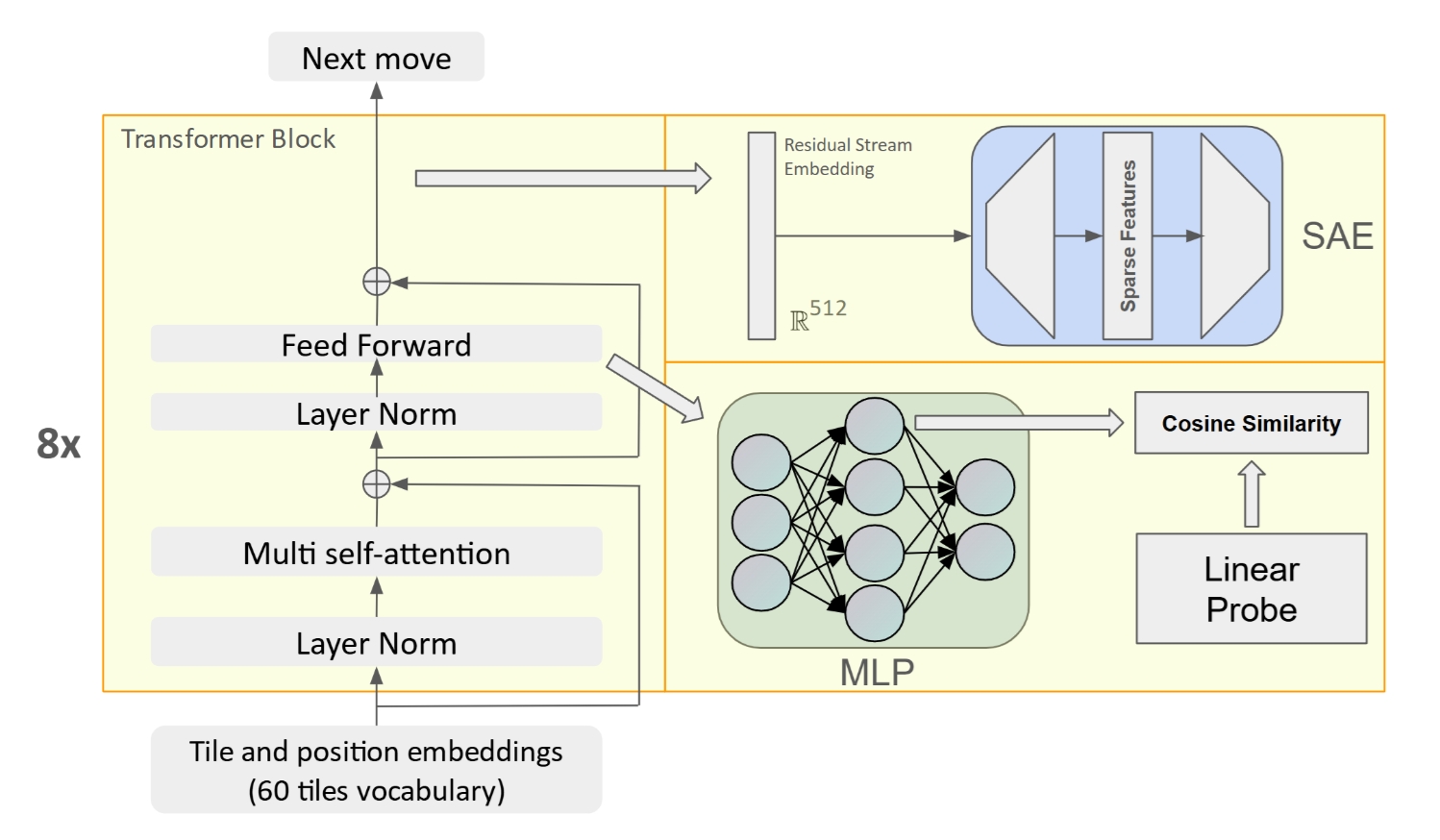

We study how OthelloGPT, a GPT model trained with next-token prediction on sequences of Othello moves, builds its internal representations layer by layer. By comparing sparse autoencoders (SAEs) with linear probes, we analyze how the model progresses from simple board structure in early layers to more dynamic gameplay concepts such as tile color and tile stability.
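To make the linear-probe side of this comparison concrete, here is a minimal sketch. It is not the authors' code. It assumes that each layer's activations have already been extracted and labeled with a binary board property (for example, whether a given tile belongs to the current player). It uses synthetic activations in place of real OthelloGPT activations. The names `make_synthetic_layer`, `train_linear_probe`, and `probe_accuracy` are hypothetical. The probe is plain logistic regression trained by gradient descent. A property that a layer encodes linearly yields high held-out probe accuracy, while an unencoded property stays near chance. The SAE side of the comparison is not shown.

```python
import math
import random


def sigmoid(z):
    # Numerically stable logistic function (avoids overflow in math.exp).
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def make_synthetic_layer(n, dim, signal, seed):
    """Hypothetical stand-in for one layer's activations.

    A binary label (e.g. a tile's state) is encoded along a random unit
    direction with strength `signal`; signal=0.0 means the layer does not
    encode the label at all.
    """
    rng = random.Random(seed)
    direction = [rng.gauss(0, 1) for _ in range(dim)]
    norm = math.sqrt(sum(d * d for d in direction))
    direction = [d / norm for d in direction]
    acts, labels = [], []
    for _ in range(n):
        y = rng.randint(0, 1)
        sign = 1.0 if y == 1 else -1.0
        acts.append([rng.gauss(0, 1) + signal * sign * d for d in direction])
        labels.append(y)
    return acts, labels


def train_linear_probe(acts, labels, lr=0.1, epochs=200):
    """Fit a logistic-regression probe w.x + b on frozen activations."""
    dim, n = len(acts[0]), len(acts)
    w, b = [0.0] * dim, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for x, y in zip(acts, labels):
            err = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) - y
            for i in range(dim):
                grad_w[i] += err * x[i]
            grad_b += err
        # Full-batch gradient step on the mean cross-entropy loss.
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def probe_accuracy(w, b, acts, labels):
    # Fraction of examples where the probe's sign matches the label.
    correct = 0
    for x, y in zip(acts, labels):
        z = sum(wi * xi for wi, xi in zip(w, x)) + b
        correct += int((z > 0) == (y == 1))
    return correct / len(acts)


if __name__ == "__main__":
    # Compare a layer that does not encode the property with one that does.
    for name, signal in [("unencoded layer", 0.0), ("encoding layer", 2.0)]:
        acts, labels = make_synthetic_layer(400, 8, signal, seed=0)
        w, b = train_linear_probe(acts[:200], labels[:200])
        print(name, probe_accuracy(w, b, acts[200:], labels[200:]))
```

Running the same probe on every layer's activations gives an accuracy-by-depth curve. A linear probe can only confirm a feature the experimenter chose to look for. SAEs, by contrast, learn features without labels, which is what makes comparing the two methods informative.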
